Talkie’s research hook is not nostalgia. It is whether a model trained before these inventions can reason its way toward the future. (Image: Talkie LM)

Alec Radford is back in the language model news, but not with a bigger chatbot chasing today's internet. He, Nick Levine, and David Duvenaud have introduced talkie-1930-13b-base, a 13B language model trained on 260 billion tokens of pre-1931 English text, plus an instruction-tuned version meant to behave like a conversation partner from roughly that world. It is charming. It is also a very practical warning label for the rest of AI.

The obvious hook is fun: ask the model about a future it was never supposed to see. Could it infer ideas that arrived after its cutoff? Could it learn Python from a few examples even though digital computers were not in its training distribution? Could it treat the twentieth century as an out-of-sample forecasting benchmark instead of as trivia already scraped into the soup?

Fine. Time-machine chatbot. Cue the sepia filter.

The useful part is much less cute. Talkie is an attempt to build a cleaner experimental instrument. Modern models are trained, distilled, tuned, and benchmarked in a data ecosystem so tangled that almost every capability claim comes with a contamination asterisk. Did the model generalize, or did some version of the answer leak into training? Did it learn reasoning, or did it learn the internet's answer-shaped residue? A model deliberately trained before 1931 gives researchers a sharper knife: many later events, inventions, programming languages, and social facts are supposed to be genuinely unavailable.

That is why this is relevant beyond AI-history people and model collectors. If you build with LLMs, you are already living inside the data provenance problem. You rely on benchmarks, evals, model cards, and vendor claims to decide what to trust. Talkie makes that problem visible by choosing an extreme cutoff and then showing how hard it still is to keep the future out.

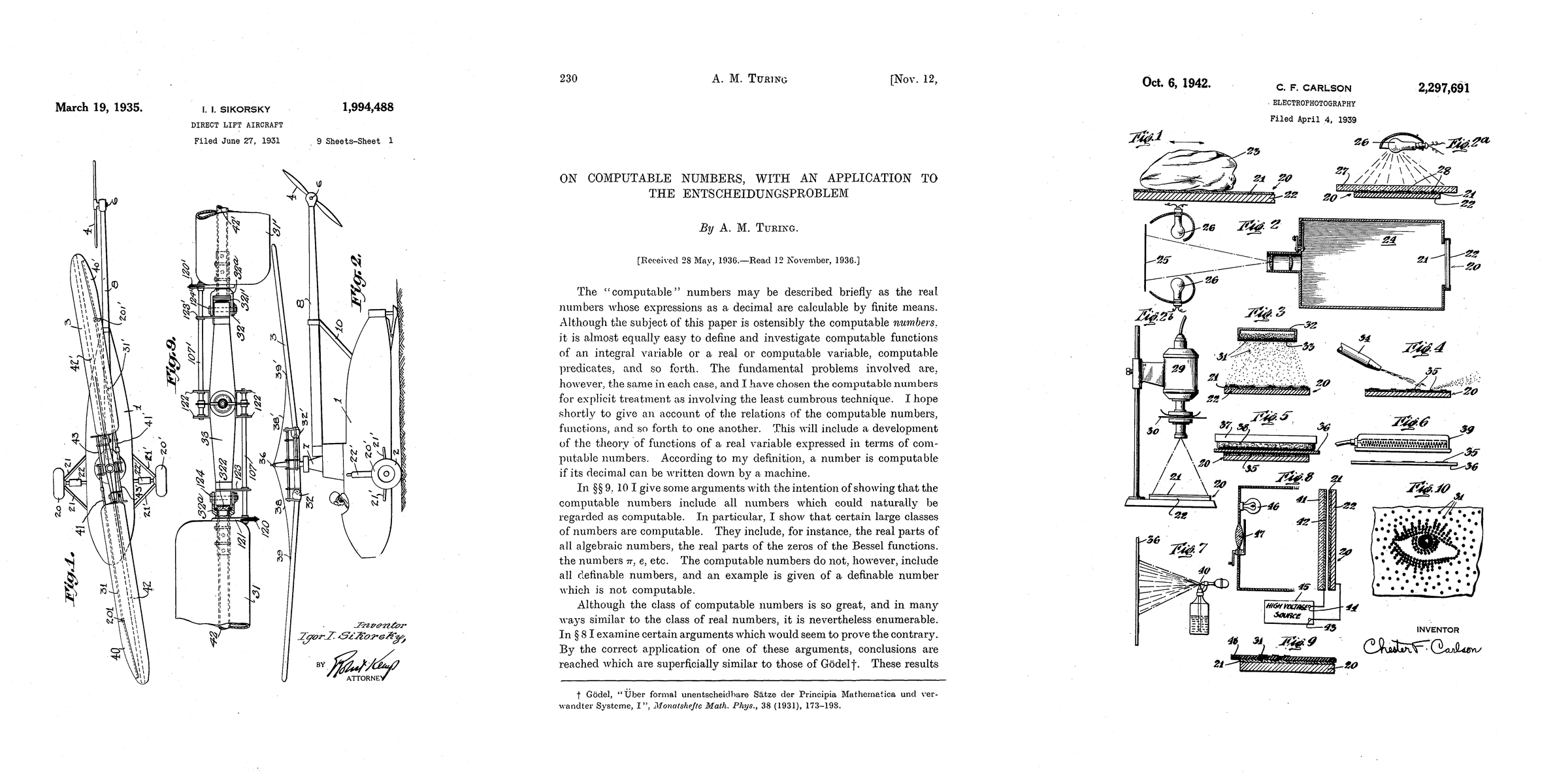

The team says the base model was trained on historical books, newspapers, periodicals, scientific journals, patents, and case law, using 1930 as the cutoff because works published through that year have entered the public domain in the United States. The model weights are available through the Talkie Hugging Face collection, and the model metadata lists Apache 2.0 licensing for both the base and instruction-tuned checkpoints. Translation: this is not just a paper toy. Researchers and sufficiently stubborn local-model people can actually inspect, run, and build around it.

The catch, naturally, is that cleanliness is a target, not a state of grace. The authors are blunt about leakage. Faulty metadata, old scans with modern introductions, editorial notes, and other messy archive artifacts can smuggle post-cutoff facts into what looks like historical data. They say an earlier 7B version knew about Franklin D. Roosevelt's presidency and New Deal legislation, and the 13B model still has some awareness of World War II and the immediate postwar order. The future got in. It always tries.
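To make the leakage problem concrete, here is a minimal sketch of the kind of crude screen an archive pipeline might run. It is not the Talkie team's method. The watchlist and the function names `leak_signals` and `is_suspect` are assumptions made for illustration, and the year check is a blunt heuristic: a 1925 essay speculating about 1950 would also trip it, so hits are candidates for review, not verdicts.

```python
import re

CUTOFF_YEAR = 1930  # cutoff described in the article

# Hypothetical watchlist: phrases that suggest post-cutoff knowledge.
# A real list would need curation; some phrases also occur innocently.
ANACHRONISMS = ("new deal", "world war ii")

# Four-digit years from 1800 through 2099.
YEAR_RE = re.compile(r"\b(1[89]\d{2}|20\d{2})\b")


def leak_signals(text, cutoff=CUTOFF_YEAR, terms=ANACHRONISMS):
    """Collect post-cutoff years and watchlist phrases found in text."""
    years = sorted({int(y) for y in YEAR_RE.findall(text) if int(y) > cutoff})
    lowered = text.lower()
    hits = [term for term in terms if term in lowered]
    return {"years": years, "terms": hits}


def is_suspect(text, cutoff=CUTOFF_YEAR, terms=ANACHRONISMS):
    """True if the document shows any sign of post-cutoff material."""
    signals = leak_signals(text, cutoff, terms)
    return bool(signals["years"] or signals["terms"])
```

A scan like this catches the easy cases, such as a modern introduction that dates itself. It does nothing about wrong metadata on a document that never mentions a year, which is part of why the authors treat cleanliness as a target.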

The second catch is post-training. The base model is the cleaner object. The chat model is more compromised because instruction-following does not appear out of the ether. The Talkie team generated instruction-response examples from historical reference works, then used synthetic prompts with Claude Sonnet 4.6 as a preference judge, followed by rejection-sampled synthetic chats between Claude Opus 4.6 and Talkie. Simon Willison, who flagged the release, called out this same tension: the base model is closer to a fully out-of-copyright, “vegan” model, while the chat model has modern AI fingerprints on it.

That does not make the project less interesting. It makes it honest. A useful AI system is almost never just pretraining weights. It is data selection, filtering, interface expectations, safety tuning, preference optimization, and a pile of invisible taste. Talkie lets us watch that pile form in a place where the anachronisms are easier to spot. When a supposedly 1930-ish model starts speaking in listicles, as the authors say an earlier version did after reinforcement learning, the absurdity is obvious. In ordinary products, the same kind of style contamination just looks like “the model got better.”

For practical readers, the lesson is not that everyone should go download a 53GB historical model tonight. The lesson is to be more skeptical of clean-sounding labels. “Open” does not mean auditable. “Licensed” does not mean uncontaminated. “Instruction-tuned” does not mean neutral. If your workflow depends on a model having no exposure to a dataset, a customer corpus, a benchmark, a future event, or a licensed work, you need evidence at the pipeline level, not vibes at the model-card level.
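What might pipeline-level evidence look like? One common starting point is an n-gram overlap check between a protected text (a benchmark item, say) and a candidate training document. The sketch below is an illustration under simple assumptions: whitespace tokenization, exact matching, and hypothetical function names `ngrams` and `overlap_ratio`. It will miss paraphrases and reformatted copies, so a low score is weak evidence of absence, not proof.

```python
def ngrams(text, n=8):
    """Set of lowercase, whitespace-tokenized n-grams in text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(protected, candidate, n=8):
    """Fraction of the protected text's n-grams that appear in candidate."""
    protected_grams = ngrams(protected, n)
    if not protected_grams:
        # Protected text is shorter than n tokens: nothing to compare.
        return 0.0
    shared = protected_grams & ngrams(candidate, n)
    return len(shared) / len(protected_grams)
```

Running `overlap_ratio` for each protected item against each training shard and logging the results is the kind of record a model card can point to. The choice of `n` is a trade-off: short n-grams flag common phrases, long ones miss lightly edited copies.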

Talkie also points toward a more interesting future for domain models. A historical LLM is one example of a deliberately constrained model: not the most capable system overall, but a system trained to make one boundary meaningful. Legal, medical, financial, scientific, and corporate models will need similar discipline if they are going to be trusted for anything more serious than autocomplete with a blazer. The hard work is not only getting more data. It is proving which data did not get in.

So yes, the demo is delightful. Ask a 1930 model for an SVG of a pelican on a bicycle and you may get something wonderfully sideways. But the real story is that Talkie turns model contamination from an abstract footnote into the main event. The next time a vendor says its model has clean training data, this is the question to ask: clean like a press release, or clean like a researcher who knows the future keeps leaking through the archive door?

In short

A 13B model trained on pre-1931 text is less a nostalgia demo than a practical test bed for clean data, synthetic tuning, and what language models really learn from the web.