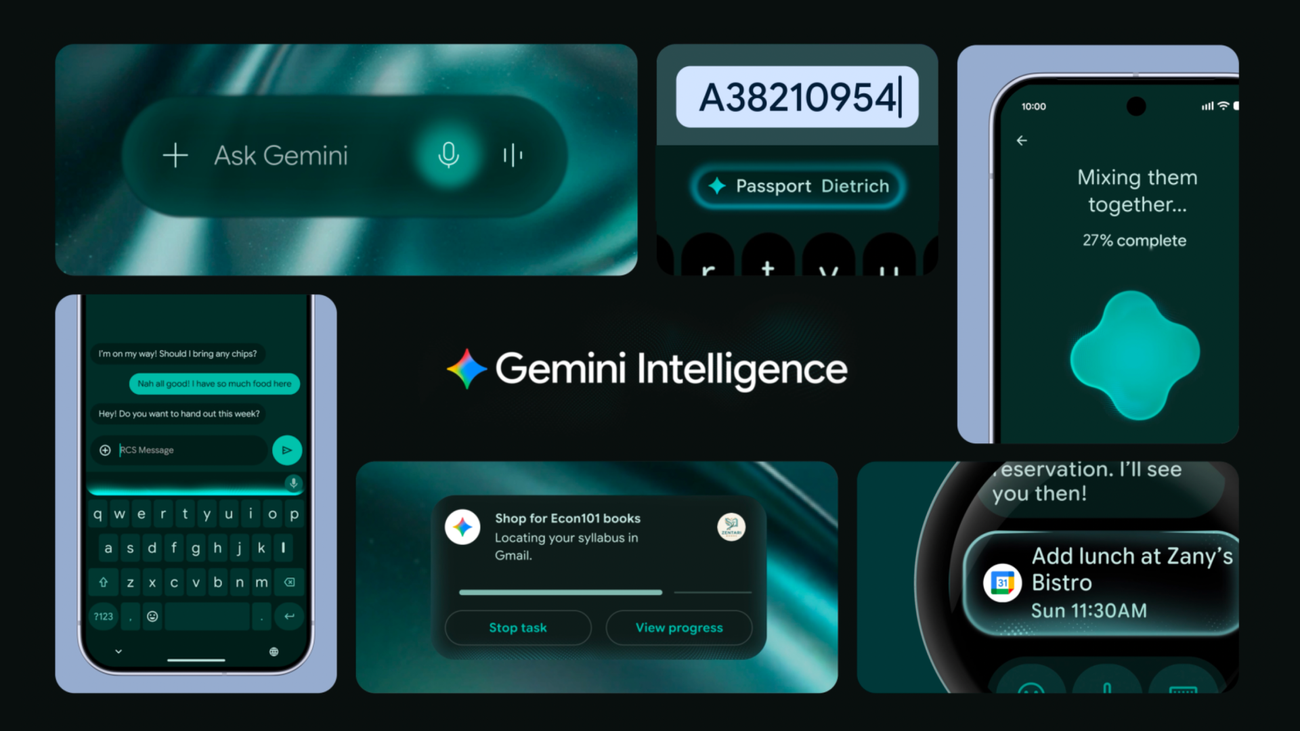

Google says Gemini Intelligence will roll out first to the latest Samsung Galaxy and Google Pixel phones this summer, then expand across Android devices later this year. (Image: Google)

Google is giving Android the agent treatment, and the useful story is not that a phone can now summarize a page or polish a text message. In Google’s Android announcement, the company introduces Gemini Intelligence as a layer that can automate multi-step app tasks, use screen or image context, assist in Chrome, fill forms with connected-app information, clean up spoken dictation, and generate custom widgets from natural language. Translation: Google is trying to move Gemini from an app you open into an operating-system behavior you summon.

That shift matters because phones are where personal AI either becomes useful or becomes ornamental. A chatbot tab is optional. The operating system is not. If Gemini can sit across apps, look at the current screen, understand a photo, work in the background, and return for final confirmation, Android becomes one of the largest distribution surfaces for practical agents. Features are easier to demo than distribution. That does not make distribution less important.

Google’s examples are intentionally ordinary, which is the right choice. Booking a ride, reserving a class bike, finding a syllabus in Gmail and adding required books to a cart, turning a grocery list into a delivery order, or using a travel-brochure photo to search for a group tour are not sci-fi tasks. They are the annoying little crossings between apps where mobile work leaks time. The real story is that Gemini Intelligence is being positioned as the connective tissue between those apps, not as another destination app with a sparkle icon.

The control language is doing a lot of work. Google says Gemini acts only on command, stops when the task is complete, tracks progress through live notifications, and leaves the final confirmation to the user. Good. That is the minimum credible shape for a consumer agent touching shopping, travel, bookings, email, and forms. The hard part will be whether “final confirmation” remains meaningful when users are tired, the screen is small, and the model has already assembled a plausible-looking result. A confirmation button is not governance by itself. It is a checkpoint, and checkpoint quality depends on what the user can inspect before tapping.
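To make that checkpoint concrete, here is a minimal sketch of the four promises as a task lifecycle. It is an illustration only, not Google's implementation: `AgentTask`, `State`, and every method name are hypothetical, and the `progress` list merely stands in for live notifications.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    RUNNING = auto()
    AWAITING_CONFIRMATION = auto()
    DONE = auto()
    CANCELLED = auto()


@dataclass
class AgentTask:
    """Hypothetical lifecycle for a consumer agent task (assumed design)."""
    steps: list[str]
    state: State = State.IDLE
    # Stands in for live progress notifications.
    progress: list[str] = field(default_factory=list)

    def start(self, user_commanded: bool) -> None:
        # Acts only on command: refuse to run without an explicit request.
        if not user_commanded:
            raise PermissionError("task must be started by the user")
        self.state = State.RUNNING
        for step in self.steps:
            self.progress.append(f"done: {step}")
        # Stop short of the irreversible action and wait for the user.
        self.state = State.AWAITING_CONFIRMATION

    def summary(self) -> list[str]:
        # Everything the user can inspect before tapping confirm.
        return list(self.progress)

    def confirm(self, approved: bool) -> None:
        # Final confirmation stays with the user; declining cancels safely.
        if self.state is not State.AWAITING_CONFIRMATION:
            raise RuntimeError("nothing to confirm")
        self.state = State.DONE if approved else State.CANCELLED


task = AgentTask(steps=["find syllabus in email", "add books to cart"])
task.start(user_commanded=True)
for line in task.summary():
    print(line)
task.confirm(approved=False)
print(task.state.name)
```

The point of the sketch is the `summary()` call sitting between `start()` and `confirm()`: if that step shows too little, the confirmation is a formality rather than a checkpoint.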

Chrome and Autofill make the strategy clearer. Gemini in Chrome is supposed to summarize, compare, research, and eventually handle mundane web tasks like appointment booking or parking reservations. Autofill with Google is supposed to use Gemini’s Personal Intelligence to fill more complex mobile forms using relevant information from connected apps, with the Gemini connection described as opt-in and adjustable in settings. This is not just convenience. It is Google trying to turn identity, browsing, app context, and personal data into a more active assistant layer. Useful, if bounded. Creepy, if the boundary gets mushy.

Rambler is the smaller feature that may be more broadly felt. Speech-to-text is fast, but people do not naturally speak in polished messages. We hedge, restart, repeat, and drift. Google says Rambler will turn natural speech into concise written text, show clearly when it is enabled, transcribe in real time, avoid storing or saving the audio, and handle multilingual messages. If it works, this is the kind of AI feature that disappears into daily behavior. Nobody says they adopted a large language model. They just stop retyping the same voice note three times because the first version sounded like a hostage transcript.

Create My Widget is the generative UI tell. Android widgets have always been a small rebellion against one-size-fits-all app screens. Google is now saying users can describe a widget — high-protein meal prep ideas, cyclist-friendly weather, whatever narrow surface matters — and have Gemini build a functional dashboard. That is a more interesting product direction than “AI answers in a box.” It suggests interfaces assembled around intent instead of shipped only as fixed app furniture. The catch is obvious: generated UI has to be reliable, legible, permission-aware, and boringly honest about where its data comes from. A hallucinated widget is not personalization. It is clutter with confidence.

There is also a hardware politics layer. Google says Gemini Intelligence will start on the latest Samsung Galaxy and Google Pixel phones this summer, then expand across watches, cars, glasses, and laptops later this year. That rollout pattern says plenty. The richest AI features are becoming a way to differentiate premium devices, OEM partnerships, and ecosystem lock-in before they become a general expectation. Android may be open in brand memory, but high-end AI behavior is increasingly about which device has the local compute, sensor access, cloud entitlement, and commercial deal to make the experience feel native.

For Useful Machines readers, the practical test is not whether Gemini Intelligence sounds impressive. It does. The test is whether it can be trusted with the boring middle of life: orders, bookings, forms, tabs, messages, widgets, and context pulled from places you forgot you connected. Watch for five things: explicit opt-ins, clear progress visibility, inspectable final confirmations, easy revocation of app/context access, and failure modes that stop safely instead of improvising. If those are strong, Android gets meaningfully more useful. If they are weak, the phone becomes another place where automation creates work for the human supervisor.

So yes, this is a phone feature launch. But it is also a map of where personal agents are likely to land first: not as separate robot coworkers, but as operating-system muscles stretched across the apps and surfaces people already use. Google’s bet is that Android can become less of a launcher and more of an intelligence system. The dry little knife is that intelligence systems do not earn trust by being proactive. They earn trust by knowing when not to act, showing their work before it matters, and making the stop button easier to find than the magic button.

In short

Google’s Gemini Intelligence turns Android into a proactive agent surface for app automation, Chrome, Autofill, voice cleanup, and custom widgets. The useful question is not whether it demos well. It is where control actually lives.
